Election season is fast approaching so you can be sure a plethora of polls will soon be adding to the mayhem. Polls educate us in two ways. They tell us what we, or at least the population being polled, think. And, in a more Orwellian sense, they tell us what we should think. Polls are used to guide how the nation is governed. For example, did you know that the unemployment rate is determined from a poll, called the Current Population Survey? Polls are important, so we need to be enlightened consumers of poll results lest we come to “love Big Brother.”

The growth of polling has been exponential, following the evolution of the computer and statistical software. Before 1990, the Gallup Organization was pretty much the only organization conducting presidential approval polls, and on average only one or two such polls were conducted per month. Within a decade, that number had increased to more than a dozen per month. Now, several dozen organizations conduct them. These pollsters don’t just ask about presidential approval, either. Polls are conducted on every issue of real importance and most of the issues of contrived importance. Many of these polls are repeated to look for changes in opinions over time, between locations, and for different demographics. And that’s just political polls. There has been an even faster increase in polling for marketing, product development, and other business applications.

So to be an educated consumer of poll information, the first thing you have to recognize is which polls should be taken seriously. Forget internet polls. Forget polls conducted in the street by someone carrying a microphone. Forget polls conducted by politicians or special-interest groups. Forget polls not conducted by a trained pollster with a reputation to protect.

For the polls that remain, consider these four factors:

- Difference between the choices

- Margin of error

- Sampling error

- Measurement error

Here’s what to look for.

Difference between Choices

The percent difference between the choices on a survey is often the only thing people look at, with good reason. It is often the only thing that gets reported. Reputable pollsters will always report their sample size, their methods, and even their poll questions, but that doesn’t mean all the news agencies, bloggers, and other people who cite the information will do the same. But the percent difference between the choices means nothing without also knowing the margin-of-error. Remember this. For any poll question involving two choices, such as Option A versus Option B, the largest margin of error will be near a 50%–50% split. Unfortunately, that’s where the difference is most interesting, so you really need to know something about the actual margin of error.

Margin-of-Error

You might have seen surveys report that the percent difference between the choices for a question has a margin-of-error of plus-or-minus some number. In fact, the margin-of-error describes a confidence interval. If survey respondents selected Option A 60% of the time with a margin-of-error of 4%, the actual percentage in the sampled population would be 60% ± 4%, meaning between 56% and 64%, with some level of confidence, usually 95%.

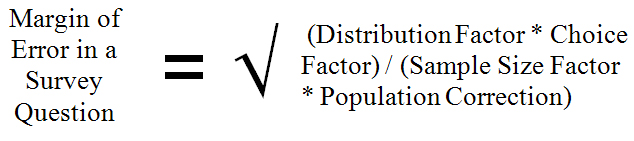

For a simple random sample from a surveyed population, the margin-of-error is equal to the square root of a Distribution Factor times a Choice Factor divided by a Sample Size Factor times a Population Correction.

- Distribution Factor is the square of the two-sided t-value based on the number of survey respondents and the desired confidence level. The greater the confidence the larger the t-value and the wider the margin-of-error.

- Choice Factor is the percentage for Option A times the percentage for Option B. That’s why the largest margin of error will always be near a 50%–50% split (e.g., 50% times 50% will always be greater than any other percentage split, like 90% times 10%).

- Sample Size Factor is the number of people surveyed. The more people you survey, the smaller the margin of error.

- Population Correction is an adjustment made to account for how much of a population is being sampled. If you sample a large percentage of the population, the margin-of-error will be smaller. It is calculated as the reciprocal of one plus the quantity the number of people surveyed (n) minus one, divided by the number of people in the population (N), or in mathematical notation, 1/(1+(n-1)/N). The correction is close to 1 when the sample is a tiny fraction of the population and falls to about 0.5 when nearly everyone is sampled.
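
As a rough sketch, the four factors above can be combined in a few lines of Python. The function name `margin_of_error` and its parameters are made up for illustration and do not come from any library. Python's standard library has no t-distribution, so the sketch takes the t-value as an argument and falls back to a normal (z) value when none is given. That fallback is an assumption that is reasonable only for large samples.

```python
from math import sqrt
from statistics import NormalDist


def margin_of_error(p, n, N=None, confidence=0.95, t_value=None):
    """Margin of error for a proportion p from a simple random sample of size n.

    N is the population size (None means skip the Population Correction).
    t_value is the two-sided t-value; if omitted, a normal z-value is used
    instead (an assumption that is reasonable only for large n).
    """
    if t_value is None:
        # Two-sided critical value, about 1.96 for 95% confidence.
        t_value = NormalDist().inv_cdf(1 - (1 - confidence) / 2)

    distribution_factor = t_value ** 2
    choice_factor = p * (1 - p)
    sample_size_factor = n
    # Population Correction as described in the text: 1 / (1 + (n - 1) / N).
    population_correction = 1.0 if N is None else 1 / (1 + (n - 1) / N)

    return sqrt(distribution_factor * choice_factor
                / sample_size_factor * population_correction)


# Option A chosen by 60% of 600 respondents; population large enough to ignore.
moe = margin_of_error(p=0.60, n=600)
print(f"60% +/- {moe:.1%}")
```

With these hypothetical inputs the result is close to the ±4% used in the example earlier in this section.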

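So the entire equation for the margin-of-error is:

Margin of Error = √( Distribution Factor × Choice Factor ÷ Sample Size Factor × Population Correction )

Or in mathematical notation, where t is the two-sided t-value, p is the percentage for Option A expressed as a proportion, n is the number of people surveyed, and N is the number of people in the population:

$$
\text{Margin of Error} = \sqrt{\frac{t^2 \, p\,(1-p)}{n} \times \frac{1}{1 + \frac{n-1}{N}}}
$$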

This formula can be simplified by making a few assumptions.

- If the population size (N) is large compared to the sample size (n), you can ignore the Population Correction. What’s large, you ask? A good rule of thumb is to use the correction if the sample size is more than 5% of the population size. If you’re conducting a census, a survey of all individuals in a population, you can’t make this assumption.

- Unless you expect a different result, you can assume the percentages for respondent choices will be about 50%–50%. This will provide the maximum estimate for the margin-of-error, ignoring other factors.

- Ignore the sample size in the Distribution Factor and use a z-score instead of a t-score. For a two-sided margin-of-error having 95% confidence, the z-score would be 1.96.

- With these values, the Distribution Factor (1.96²) times the Choice Factor (50%², or 0.25) equals about 0.96, which is close to 1.
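
Spelling out the arithmetic behind these simplifications may help. In this sketch, z is the z-score, p is the share choosing Option A, and n is the number of responses; the symbols are shorthand introduced here, and the Population Correction is dropped as described in the first assumption.

$$
\text{Margin of Error} \approx \sqrt{\frac{z^2 \, p\,(1-p)}{n}} = \sqrt{\frac{1.96^2 \times 0.5 \times 0.5}{n}} = \sqrt{\frac{0.9604}{n}} \approx \frac{0.98}{\sqrt{n}} \approx \frac{1}{\sqrt{n}}
$$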

These assumptions reduce the equation for the margin-of-error to approximately 1/√n. What could be simpler? Here’s a chart to illustrate the relationship between the number of responses and the margin-of-error. The margin-of-error gets smaller with an increase in the number of respondents, but each additional response reduces the error by less than the one before it. Most pollsters don’t use more than about 1,200 responses simply because the cost of obtaining more responses isn’t worth the small reduction in the margin-of-error. Don’t worry about the Current Population Survey, though. The Bureau of Labor Statistics polls about 110,000 people every month, so its margin-of-error is less than half of a percentage point.
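
The relationship in the chart is easy to reproduce. The following sketch tabulates the simplified 1/√n estimate for a handful of sample sizes. The function name `simple_margin_of_error` is made up for illustration, and the sample sizes are arbitrary apart from 1,200 and 110,000, which come from the paragraph above.

```python
from math import sqrt


def simple_margin_of_error(n):
    """Simplified 95% margin of error, assuming a 50%-50% split and a large population."""
    return 1 / sqrt(n)


sample_sizes = [100, 400, 1_000, 1_200, 2_400, 110_000]
for n in sample_sizes:
    print(f"{n:>7,} responses: +/- {simple_margin_of_error(n):.1%}")
```

Under this estimate, doubling the responses from 1,200 to 2,400 cuts the margin-of-error by less than one percentage point, which is the diminishing return described above.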

Always look for the margin-of-error to be reported. If it’s not, look for the number of survey responses and use the chart or the equation to estimate the margin of error. Here’s a good point of reference. For 1,000 responses, the margin-of-error will be about ±3% for 95% confidence. So if a political poll indicates that your candidate is behind by two points, don’t panic; the election is still too close to call.
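
That rule of thumb can be written as a small check. This is only a sketch of the comparison described above: the function name `too_close_to_call` and its parameters are made up for illustration, and it falls back to the simplified 1/√n estimate when no margin-of-error is reported.

```python
from math import sqrt


def too_close_to_call(gap_points, n_responses=None, reported_moe_points=None):
    """Return True if the gap between two choices is within the margin of error.

    gap_points and reported_moe_points are in percentage points. If no margin
    of error was reported, estimate it as 1/sqrt(n) (95% confidence, 50%-50%
    split, large population).
    """
    if reported_moe_points is None:
        if n_responses is None:
            raise ValueError("need either a reported margin of error or a sample size")
        reported_moe_points = 100 / sqrt(n_responses)
    return abs(gap_points) <= reported_moe_points


# A candidate trailing by two points in a poll of 1,000 responses.
print(too_close_to_call(gap_points=-2, n_responses=1_000))
```

A reported margin-of-error, when available, is preferable to the estimate because it reflects the pollster's actual design.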

Sampling Error

Sampling error in a survey involves how respondents are selected. You almost never see this information reported about a survey for several reasons. First, it’s boring unless you’re really into the mechanics of surveys. Second, some pollsters consider it a trade secret that they don’t want their competition to know about especially if they’re using some innovative technique to minimize extraneous variation. Third, pollsters don’t want everyone to know exactly what they did because then it might become easy to find holes in the analysis.

Ideally, potential respondents would be selected randomly from the entire population of respondents. But you never know who all the individuals are in a population, so you have to use a frame to access the individuals who are appropriate for your survey. A frame might be a telephone book, voter registration rolls, or a top-secret list purchased from companies who create lists for pollsters. Even a frame can be problematic. For instance, to survey voter preferences, a list of registered voters would be better than a telephone book because not everyone is registered to vote. But even a list of registered voters would not indicate who will actually be voting on Election Day. Bad weather might keep some people at home while voter assistance drives might increase turnout of a certain demographic.

A famous example of a sampling error occurred in 1948 when pollsters conducted a telephone survey and predicted that Thomas E. Dewey would defeat Harry S. Truman. At the time, telephones were a luxury owned primarily by the wealthy, who supported Dewey. When voters, both rich and poor, went to the polls, it was Truman who was victorious. This may seem obvious in retrospect, but there’s an analogous issue today. When cell phones were introduced, the numbers were not compiled into lists, so cell phone users, primarily younger individuals, were undersampled in surveys conducted over landlines.

Another issue is that survey respondents need to be selected randomly from a frame by the pollster to ensure that bias is not introduced into the study. Open-invitation internet surveys fail to meet this requirement, so you can never be sure if the survey has been biased by freepers. Likewise, if someone with a microphone approaches you on the street, it’s more likely to be a late-night talk show host than a legitimate pollster.
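
To make random selection from a frame concrete, here is a minimal sketch. The frame is a made-up list of voter IDs, and the names `frame` and `draw_sample` are hypothetical. Real pollsters typically use more elaborate designs than the simple random sample shown here.

```python
import random


def draw_sample(frame, sample_size, seed=None):
    """Select respondents from the frame at random, without replacement."""
    rng = random.Random(seed)  # a fixed seed makes the draw repeatable
    return rng.sample(frame, sample_size)


# Hypothetical frame: 10,000 entries standing in for a voter registration roll.
frame = [f"voter-{i:05d}" for i in range(10_000)]
respondents = draw_sample(frame, sample_size=1_000, seed=42)
print(len(respondents), "respondents selected;", len(set(respondents)), "unique")
```

The point of the design is that the pollster, not the respondent, decides who ends up in the sample, which is exactly what an open-invitation internet survey cannot guarantee.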

Measurement Error

Measurement error in a survey usually involves either the content of a survey or the implementation of the survey. You might get to see the survey questions but you’ll never be able to assess the validity of the way a survey is conducted.

Content involves what is asked and how the question is worded. For example, you might be asked “what is the most important issue facing our country” with the possible responses being flag burning, abortion, social security, Congressional term limits, or earmarks. Forget unemployment, the economy, wars, education, the environment, and everything else. You’re given limited choices so that other issues can be reported to be less important.

Politicians are notorious for asking poll questions in ways that will support their agenda. They also use polls not to collect information but to disseminate information about themselves or disinformation about their opponents. This is called push polling.

The last thing to think about for a poll is how the responses were collected. For autonomous surveys, look at how the questions are worded. For direct response surveys, even if you could get a copy of the script used to ask the questions, there’s no telling what was actually said, what body language was used, and so on. Professional pollsters may create the surveys but they are often implemented by minimally trained individuals.

Read more about using statistics at the Stats with Cats blog. Join other fans at the Stats with Cats Facebook group and the Stats with Cats Facebook page. Order Stats with Cats: The Domesticated Guide to Statistics, Models, Graphs, and Other Breeds of Data Analysis at Wheatmark, amazon.com, barnesandnoble.com, or other online booksellers.
