1. Where’s the Beef?

In a way, the worst flaw a data analysis can have is no analysis at all. Instead, you get data lists, sorts and queries, and maybe some simple descriptive statistics, but nothing that addresses objectives, answers questions, or tells a story. If that’s all you want, that’s fine. But a data report is not a data analysis. Reports provide information; analyses provide knowledge. It’s like your bank account. Sometimes you just want a quick report of your balance. That information has to be readily available whenever you might need it, and both you and the bank have to be working with exactly the same data. If you want to assess patterns in your spending, though, you have to conduct an analysis. Say you want to figure out how much more you’ve been spending on commuting over the past five years. You’ll have to compile the data and scrub out anomalies, like the cross-country driving you did on vacation, before you look for patterns. Analyses involve much more than a glance (https://statswithcats.wordpress.com/2010/08/22/the-five-pursuits-you-meet-in-statistics/). They take time, sometimes a lot of time. To make sure you’re getting what you need, look beyond the data tables for models, findings, conclusions, and recommendations. If they’re not there, you didn’t get an analysis.

2. Phantom Populations

If there were to be a fatal flaw in an analysis, it would probably involve how well the samples represent the population. Sometimes data analysts don’t give enough thought to the populations they want to analyze. They use observations to make inferences about a population that doesn’t exist. Populations must be based on some identifiable commonalities that would meaningfully affect some characteristic. A group of anomalies would not be a population. Opinion polls sometimes suffer from phantom populations. Say you surveyed people wearing red shirts. Could you then generalize to everyone who wears red shirts? Canadian researchers found one such phantom population when they tried to create a control group of men who had not been exposed to pornography (http://www.telegraph.co.uk/relationships/6709646/All-men-watch-porn-scientists-find.html). Make sure the population being analyzed is more than an illusion.

3. Wow, Sham Samples

Sometimes the population is real and well defined, but the samples don’t represent it adequately. This is a common criticism of opinion polls, especially election polls. It was the reason cited when exit polls during the presidential election of 2004 indicated that John Kerry had won many precincts that ballot counts later awarded to George Bush. Medical and sociological studies may have sham samples because it is often difficult to select subjects to match some target demographic. Likewise, environmental studies can suffer from inconsistencies between soil types or aquifers. To identify sham samples, look for three things: (1) a clear definition of a real population, (2) a description of how samples were selected so that they represent the population, and (3) information about any changes that occurred during sampling, such as subjects being dropped or samples moved.

4. Enough Is Enough

The number of samples always seems to be an issue in statistical studies (https://statswithcats.wordpress.com/2010/07/17/purrfect-resolution/). For too few samples, question confidence and power; for too many samples, question meaningfulness (https://statswithcats.wordpress.com/2010/07/26/samples-and-potato-chips/). Usually analysts are ready for this question, but beware if they cite the old familiar fable about using 30 samples (https://statswithcats.wordpress.com/2010/07/11/30-samples-standard-suggestion-or-superstition/). It may indicate that their understanding of statistics is not as formidable as you supposed. Also, if they appear to be using a reasonable number of samples but then break out categories for further analysis, make sure each category has an appropriate number of samples for the analysis they are doing.
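To see why no single number like 30 can be right for every study, here is a minimal sketch of one textbook sample-size calculation. It assumes a one-sample, two-sided z-test with a known standard deviation, which is a simplification, not the method of any particular study. The function name `samples_needed` and its parameters are made up for illustration.

```python
import math
from statistics import NormalDist


def samples_needed(delta, sigma, alpha=0.05, power=0.80):
    """Approximate sample size for a one-sample, two-sided z-test.

    delta: smallest difference worth detecting (same units as sigma)
    sigma: standard deviation, assumed known (a simplifying assumption)
    alpha: tolerated false positive rate
    power: desired chance of detecting a true difference of size delta
    """
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)
    z_beta = standard_normal.inv_cdf(power)
    return math.ceil(((z_alpha + z_beta) * sigma / delta) ** 2)


# The required number of samples depends heavily on the difference you care about.
for delta in (1.0, 0.5, 0.2):
    print(delta, samples_needed(delta, sigma=1.0))
```

Under these assumptions, detecting a difference of one standard deviation takes 8 samples, half a standard deviation takes 32, and a fifth of a standard deviation takes 197. The right number depends on the variability, the size of the difference that matters, and the error rates you can live with.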

5. Indulging Variance

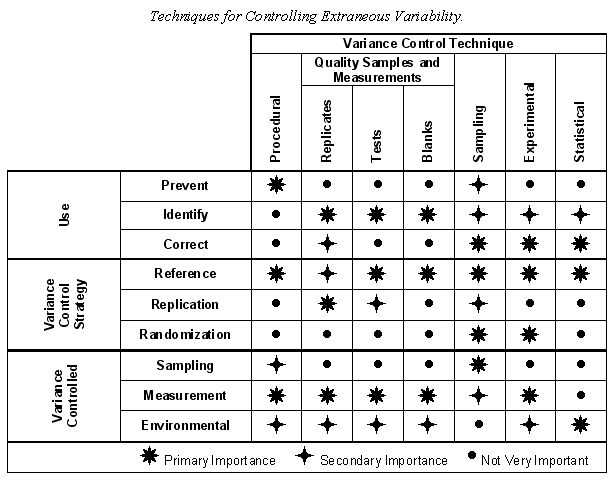

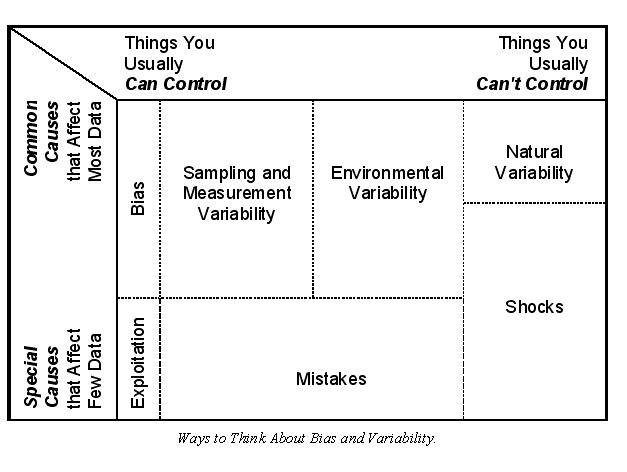

Most people don’t appreciate variance. They don’t even know it’s there (https://statswithcats.wordpress.com/2010/08/01/there%E2%80%99s-something-about-variance/). If their candidate for office is up by two percentage points in a poll, they figure the election is in the bag. Even professionals like scientists, engineers, and doctors don’t want to deal with it. They ignore it whenever they can and just address the average or most common case. Business people talk about variances all the time, only they mean differences rather than statistical dispersion. Baseball players thrive on variance. Where else can you have two failures out of every three chances and still be considered a star? Data analysts have to understand variance and address it at every step of a project. Look for how variance will be controlled in study plans (https://statswithcats.wordpress.com/2010/09/05/the-heart-and-soul-of-variance-control/; https://statswithcats.wordpress.com/2010/09/19/it%E2%80%99s-all-in-the-technique/). Look for variance to be reported with results. And most importantly, look for some assessment of how uncertainty affects any decisions made from the analysis.
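The two-point poll lead is easy to check with a rough sketch. The numbers below are hypothetical: a simple random sample of 1,000 respondents, with 51% for one candidate and 49% for the other. The function names are made up for this example, and the formulas are the usual normal approximations, so real polls with weighting or clustering would have wider margins.

```python
import math

Z_95 = 1.96  # approximate two-sided 95% critical value of the standard normal


def share_margin(p, n, z=Z_95):
    """Margin of error for one candidate's share p in a simple random sample of n."""
    return z * math.sqrt(p * (1 - p) / n)


def lead_margin(p1, p2, n, z=Z_95):
    """Margin of error for the lead p1 - p2 when both shares come from the same sample."""
    return z * math.sqrt((p1 + p2 - (p1 - p2) ** 2) / n)


n = 1000  # hypothetical number of respondents
print(round(100 * share_margin(0.51, n), 1))       # margin on one share, in points
print(round(100 * lead_margin(0.51, 0.49, n), 1))  # margin on the lead, in points
```

With these assumed numbers, each share is good to about plus or minus 3.1 points, and the lead itself to about plus or minus 6.2 points. A two-point lead sits well inside that range, so the election is not in the bag.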

6. Madness to the Methods

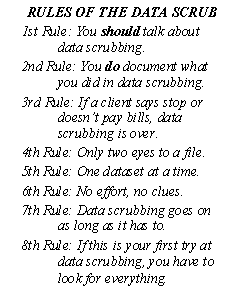

NASA uses checklists to ensure that every astronaut does things correctly, completely, and consistently. Make sure the analysis you are doing or reviewing takes the same care. If there are multiple data collection points or times, be sure there is a standard protocol or script for generating the data. Be especially concerned if the data are collected over multiple years. Better and cheaper methods and equipment are continuously being developed, so be sure the data they produce are compatible with data from the older ones (https://statswithcats.wordpress.com/2010/09/12/the-measure-of-a-measure/). Be sure the data have been scrubbed adequately of errors, censored and missing data, replicates, and outliers (https://statswithcats.wordpress.com/2010/10/17/the-data-scrub-3/). Finally, be sure the data analysis method is appropriate for the numbers and natures of the variables and samples (https://statswithcats.wordpress.com/2010/08/27/the-right-tool-for-the-job/).

7. Torrents of Tests

If a single statistical test is conducted in a study, false positives and false negatives can be controlled, or at least evaluated. But if there are many tests, you can bet there will be false results just because of Mother Nature’s sense of humor. In groundwater testing, for example, there may be a test for every combination of well, analyte, and sampling round, resulting in literally hundreds of tests. There are strategies for dealing with this type of situation, such as hierarchical testing and the use of special tests (look for the term Bonferroni). Be careful of bad decisions based on a small proportion of the tests being (apparently) significant.
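Here is a minimal sketch of the arithmetic behind that warning. The groundwater program is hypothetical (10 wells, 10 analytes, 4 sampling rounds), and the function names are made up for illustration. The familywise error formula assumes the tests are independent, which real monitoring data rarely are, so treat the result as a rough guide.

```python
def familywise_error_rate(alpha, n_tests):
    """Chance of at least one false positive across n_tests independent tests."""
    return 1 - (1 - alpha) ** n_tests


def expected_false_positives(alpha, n_tests):
    """Average number of false positives when no real effects exist."""
    return alpha * n_tests


def bonferroni_alpha(alpha, n_tests):
    """Per-test significance level under the Bonferroni correction."""
    return alpha / n_tests


# Hypothetical monitoring program: one test per well, analyte, and round.
n_tests = 10 * 10 * 4
alpha = 0.05

print(familywise_error_rate(alpha, n_tests))
print(expected_false_positives(alpha, n_tests))
print(bonferroni_alpha(alpha, n_tests))
```

With 400 tests at the 0.05 level, at least one false positive is nearly certain and about 20 are expected even if nothing is wrong. The Bonferroni correction tightens the per-test level to 0.000125, which keeps the chance of any false positive at or below 0.05 at the cost of reduced power.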

8. Significant Insignificance and Insignificant Significance

Here’s where you have to use your gut feel. If a test is statistically significant and you don’t believe it should be, ask about the confidence level and whether the size of the difference is meaningful. Just as correlation doesn’t necessarily imply causation, significance doesn’t necessarily imply meaningfulness. If something is not statistically significant and you believe it should be, ask about the power of the test and the size of the difference the test should have detected. Be sure the study looked at violations of assumptions (https://statswithcats.wordpress.com/2010/10/03/assuming-the-worst/). Also, look for what’s not there. Sometimes studies do not report nonsignificant results. Such results could be exactly what you’re looking for.
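A small sketch shows how significance and meaningfulness can part ways. It assumes a one-sample, two-sided z-test with the difference expressed in standard deviation units. The function name `two_sided_p` and the example numbers are made up for illustration.

```python
import math


def two_sided_p(effect_in_sd, n):
    """Two-sided p-value for a one-sample z-test.

    effect_in_sd: observed difference divided by the standard deviation
    n: number of samples
    """
    z = abs(effect_in_sd) * math.sqrt(n)
    # erfc(z / sqrt(2)) equals 2 * (1 - Phi(z)) and stays accurate for large z
    return math.erfc(z / math.sqrt(2))


# A trivial difference with a huge sample: significant but probably not meaningful.
print(two_sided_p(0.01, 1_000_000))

# A sizable difference with a tiny sample: possibly meaningful but not significant.
print(two_sided_p(0.5, 10))
```

Under these assumptions, a difference of one hundredth of a standard deviation is overwhelmingly significant with a million samples, while a difference of half a standard deviation fails the 0.05 test with ten. Neither p-value says anything about whether the difference matters.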

9. Extrapolation Intoxication

Make sure the data spans the parts of the variable scales about which you want to make predictions. If a study collects test data at ambient indoor temperature, beware of predictions made under freezing conditions. Likewise, be careful of tests on rabbits that are extrapolated to humans, maps showing information beyond the limits observed, surveys of one demographic extrapolated to another, and the like. Perhaps the only example of extrapolation that is even grudgingly accepted by statisticians is time-series analysis (https://statswithcats.wordpress.com/2010/08/15/time-is-on-my-side/). You have to extrapolate to predict the future. The issue is how far into the future is reasonable, which will depend on the degree of autocorrelation, the stability of the data, and the model.
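To illustrate the temperature example, here is a sketch with made-up data. It assumes a response that is really curved (the square of temperature, chosen only for convenience) but is measured only from 15 to 25 degrees, then fits a straight line by ordinary least squares. The function names are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def predict(x_new, xs, ys):
    """Return the fitted value and whether x_new lies inside the observed range."""
    slope, intercept = fit_line(xs, ys)
    inside_range = min(xs) <= x_new <= max(xs)
    return slope * x_new + intercept, inside_range


# Made-up data: a curved response observed only at indoor temperatures.
xs = list(range(15, 26))
ys = [x ** 2 for x in xs]

for x_new in (20, 0):
    fitted, inside_range = predict(x_new, xs, ys)
    print(x_new, round(fitted, 1), x_new ** 2, inside_range)
```

Inside the observed range, at 20 degrees, the line predicts about 410 against a true value of 400. At 0 degrees it predicts about -390 against a true value of 0. The fit looks fine where there are data and fails badly where there are none, and nothing in the fit itself warns you, which is why the range check is worth reporting alongside any prediction.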

10. Misdirected Models

Models are great tools for helping you understand your data (https://statswithcats.wordpress.com/2010/08/08/the-zen-of-modeling/). Statistical models are based on data. Deterministic models, though, rely on theories, mainly the theories believed by the researcher using the model. But deterministic models are no better than the theories on which they are based. Misdirected models involve researchers creating models based on biased or mistaken theories, and then using the models to explain data or observed phenomena in a way that fits the researchers’ preconceived notions. This flaw is more common in areas that tend to be more observational than experimental.
